VxWorks 653

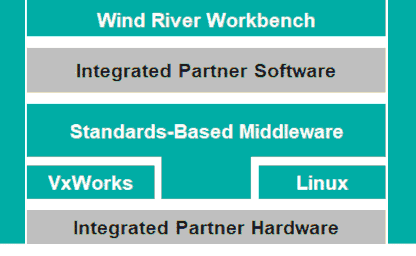

VxWorks® 653 is an integrated modular avionics (IMA) platform enabling workload consolidation of safety-critical and less-critical applications.

VxWorks Cert Edition is a platform for safety-critical applications that require DO-178C, IEC 61508, IEC 62304, or ISO 26262 certification evidence.

Workbench is an integrated development environment (IDE) that supports the construction of VxWorks projects.

The latest release of VxWorks supports Open Container Initiative (OCI) containers, so you can use traditional IT technologies to develop and deploy intelligent edge software faster and more efficiently.

A real-time solution for mission-critical problems: when you need your embedded system to work, Wind River is here.

VxWorks is a robust real-time operating system with many powerful features you can use in your project.

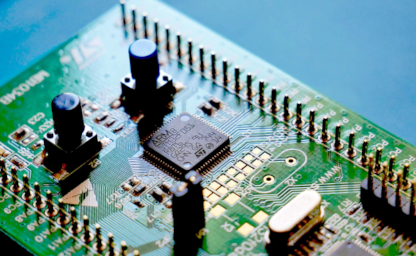

All VxWorks projects are different, but they all require hardware configuration.

VxWorks supports a variety of boot strategies, many of which are board specific.

Wind River, along with other developers, has been working on an open-source project for establishing connections with embedded systems.

Simulators recreate the target board in software, producing the same results the real board would produce.

Targets and connections are the hardware side of a VxWorks project. The software side consists of the VxWorks project types and workspaces.

Good file hygiene matters on any project, so you need to know where everything is.

At the center of every VxWorks project are the VxWorks source build (VSB) and VxWorks image project (VIP).

VxWorks provides the vxprj utility to create, manage, and build VxWorks projects.

ROMFS stands for read-only memory file system. The ROMFS sits at the top of the VxWorks memory layout.

Configuring and setting up the VSB and VIP is known as platform development.

The kernel shell is a powerful tool that can be used for development, debugging, and deployment of a VxWorks project.

The kernel shell supports vi-style line editing as its primary mode and emacs-style editing as a secondary mode.

The kernel shell configuration variables control various settings in a shell session.
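As a rough mental model only, a shell session's configuration can be pictured as a small per-session table of name/value strings that the shell reads and updates. The C sketch below is a standalone simulation, not VxWorks code: the `shell_config` type and the `config_set`/`config_get` helpers are hypothetical, and a variable name such as `LINE_EDIT_MODE` is an assumed example. Check the kernel shell documentation for the real variable names and the command used to set them.

```c
#include <stddef.h>
#include <string.h>

/* Hypothetical model of per-session shell configuration variables.
 * This is NOT the VxWorks API; all names here are illustrative assumptions. */
#define MAX_VARS 8
#define NAME_LEN 32
#define VALUE_LEN 32

typedef struct {
    char name[NAME_LEN];
    char value[VALUE_LEN];
} config_var;

typedef struct {
    config_var vars[MAX_VARS];
    int count;              /* number of variables currently set */
} shell_config;

/* Return the value of a variable, or NULL if it is not set in this session. */
const char *config_get(const shell_config *cfg, const char *name) {
    for (int i = 0; i < cfg->count; i++) {
        if (strcmp(cfg->vars[i].name, name) == 0) {
            return cfg->vars[i].value;
        }
    }
    return NULL;
}

/* Set or overwrite a variable. Returns 0 on success, or -1 if a string
 * is too long or the table is full. */
int config_set(shell_config *cfg, const char *name, const char *value) {
    if (strlen(name) >= NAME_LEN || strlen(value) >= VALUE_LEN) {
        return -1;
    }
    for (int i = 0; i < cfg->count; i++) {
        if (strcmp(cfg->vars[i].name, name) == 0) {
            strcpy(cfg->vars[i].value, value);  /* overwrite existing value */
            return 0;
        }
    }
    if (cfg->count == MAX_VARS) {
        return -1;
    }
    strcpy(cfg->vars[cfg->count].name, name);
    strcpy(cfg->vars[cfg->count].value, value);
    cfg->count++;
    return 0;
}
```

Because each session owns its own `shell_config`, changing a setting in one session in this model leaves every other session's settings untouched.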

The kernel shell can use two modes: the C interpreter and the command interpreter.
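The two modes can be sketched as a simple state machine. This is a standalone C simulation, not VxWorks source code. It assumes that typing `cmd` at the C interpreter prompt switches to the command interpreter and typing `C` switches back, and it assumes the default prompts `-> ` and `[vxWorks *]# `. Confirm both against the kernel shell documentation for your release. The `shell_mode` type and helper functions are hypothetical.

```c
#include <string.h>

/* Hypothetical model of the kernel shell's two interpreter modes.
 * Standalone simulation, NOT VxWorks source code. */
typedef enum { MODE_C_INTERP, MODE_CMD_INTERP } shell_mode;

/* Prompt shown for each mode (assumed defaults). */
const char *shell_prompt(shell_mode mode) {
    return mode == MODE_C_INTERP ? "-> " : "[vxWorks *]# ";
}

/* Handle one input line and return the mode in effect afterwards.
 * "cmd" in C mode switches to the command interpreter; "C" in command
 * mode switches back. Any other line would be evaluated by the current
 * interpreter, which this sketch does not model. */
shell_mode shell_handle_line(shell_mode mode, const char *line) {
    if (mode == MODE_C_INTERP && strcmp(line, "cmd") == 0) {
        return MODE_CMD_INTERP;
    }
    if (mode == MODE_CMD_INTERP && strcmp(line, "C") == 0) {
        return MODE_C_INTERP;
    }
    return mode;
}
```

The switch tokens are checked only in the mode where they apply. Typing `cmd` while already in the command interpreter leaves the mode unchanged in this model.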

Visit Wind River to learn more about Wind River Learning Subscription, Instructor-Led Training, and Custom Training.